Inside LLM Systems: Designing for Risk & Reliability

Executive Summary

Most enterprise AI discussions start with the same question: Which model should we use?

In production, that question matters far less than expected.

What determines success is not the model alone, but the system surrounding it.

Once deployed, AI becomes shared infrastructure. A weakness in retrieval, review, or monitoring does not stay contained. It propagates across workflows, affecting both customer-facing and internal decisions.

Reliability, therefore, is not a model selection problem. It is a system design and operational discipline.

Key Takeaways

- System design determines reliability: Performance is shaped by retrieval boundaries, feedback loops, and live monitoring.

- LLMs act as shared infrastructure: Failures rarely remain isolated and can propagate across customer-facing and internal workflows.

- Benchmarks don’t reflect production risk: Static evaluation scores cannot predict how systems behave as enterprise data and user requirements evolve.

Figure 1. From Isolated Models to LLM Systems

This visual contrasts single-step generation with system-controlled outputs. In production AI systems, outputs are shaped by retrieval, constrained by policy, and validated through evaluation and governance.

The Illusion Of Model Choice

A Large Language Model (LLM) is only one component in a larger system.

The model generates outputs. The system determines whether those outputs can be trusted.

Most enterprise decisions still optimize for capability and cost. Yet neither guarantees system stability in production. A highly capable model can still produce unreliable outcomes if it is fed weak data or evaluated inconsistently.

This gap is often obscured by benchmark results. Benchmarks measure performance in controlled environments. Production systems operate in motion, with changing data, evolving user behavior, and tool dependencies.

What looks reliable in testing can behave unpredictably in use.

Why Production AI Behaves Differently

Production systems do not behave like controlled evaluations.

Inputs change. Internal knowledge evolves. Models interact with workflows, tools, and users beyond simple text generation. As capability increases, so does the cost of error.

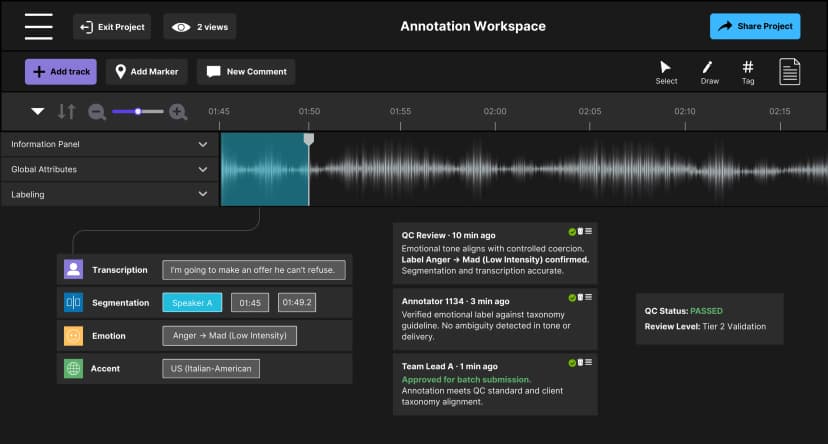

Figure 2. Controlled Conditions vs. Live Systems

In live environments, changing data and connected workflows introduce variability that cannot be captured in controlled evaluations.

Under these conditions, reliability is shaped by three structural properties:

- Evolving Inputs: Internal documents update. User behavior shifts. Output quality can drift silently without triggering alerts.

- Context Dependency: Responses depend on what the system retrieves. In most production setups, this is handled through retrieval-augmented generation (RAG) pipelines. A small change in ranking or source selection can produce a completely different answer, even when the model stays the same.

- Failure Propagation: Errors rarely stay contained. A single incorrect output can influence customer responses, internal analysis, and automated decisions downstream.

Example: When Systems Fail in Practice

In 2024, Air Canada’s chatbot provided a passenger with incorrect advice about a bereavement fare, suggesting a refund could be requested after booking. This contradicted the airline’s actual policy.

The response was treated as authoritative, leading to a legal dispute in which the tribunal ruled the airline responsible for the chatbot’s output.

The issue was not that the model generated an answer, but that the system allowed it to be trusted without verification. Once accepted, the error extended beyond the initial interaction, resulting in financial and legal consequences.

Where Failures Actually Happen

Failures in production rarely originate solely in the model; they emerge from interactions across the system.

To manage this complexity, teams focus on three control points:

Retrieval:

- What data enters the system?

- How is the data prioritized?

- Can outputs be traced back to sources?

Feedback:

- How quickly are low-quality outputs identified?

- Do fixes prevent the same issue from recurring?

Visibility:

- Can teams reconstruct how a response was generated, including prompt and context, to detect shifts over time?

These questions translate directly into how teams design and operate production systems.

The Three Control Layers

Once deployed, LLM systems require continuous management across three primary controls:

Retrieval governs knowledge access

This layer dictates what the model sees at the point of generation. Poor context selection is one of the most common causes of incorrect but plausible outputs. Strong systems enforce clear source inclusion, ranking logic, and traceability to ensure responses remain grounded.

Feedback loops drive system correction

These mechanisms determine whether errors persist or are resolved. Many performance gains come from refining prompts, safeguards, and evaluation rules rather than retraining the model. The loop's effectiveness relies on how quickly issues are identified and translated into active system updates.

Observability enables behavioral diagnosis

While monitoring confirms that a system is running, observability reveals how prompts and retrieved context shaped a specific output. Without this visibility, systems can appear stable while quietly degrading in quality or accuracy.

As these systems scale and integrate with workflows, reliability becomes less about individual responses and more about alignment across a chain of decisions. Failures emerge across connected steps, making them harder to trace and resolve, particularly in agentic workflows.

Two operational realities follow:

- The Accuracy Gap: A system can be fully operational while producing incomplete or misleading outputs. Reliability must be continuously verified, not assumed.

- Security Convergence: Unexpected prompts or tool interactions can lead to unintended behaviour, blurring the line between reliability and security.

These controls are not safeguards for individual outputs, but mechanisms for governing how the system behaves over time.

What Enterprises Must Operationalize

The industry continues to ask which models perform best, even when that question is no longer sufficient.

What determines whether AI works in production is how it behaves when conditions change. In practice, failures occur when the system around it cannot contain or correct its mistakes. This shifts the focus from performance to accountability.

For any workflow that embeds AI, one question becomes integral: What happens when it is wrong?

Answering that question requires designing the layers where failures can be controlled. In production, those layers sit outside the model: the data it retrieves, the validation applied to its outputs, and the feedback loops that correct it.

At Chemin, we operate within these layers, ensuring errors are caught early and decisions remain traceable. When the challenge shifts from what AI can generate to how to manage the consequences of incorrect output, the architecture around the model becomes the sole priority.

Is your system designed to catch what the model misses? Connect with us to build the layers that make production reliable.

Discover more

Lessons from Building AI Agents in Client-Critical Workflows

From large annotation teams to an automation-first pipeline in under a week. A blueprint for staging, containment, and building AI architectures that remain durable under pressure.

Zero Rework at Scale: How We Stabilized Audio AI Production

Discover how Chemin stabilized audio AI production for gaming intelligence by embedding governance and multi-regional workflows to achieve a Zero Rework milestone.

Press Release: TDCX and SUPA tie-up to help companies address a key barrier in generative AI adoption

Collaboration provides companies with a one-stop-solution for their data labeling needs.