Scaling to peak output: 294% growth for a premier gaming studio’s audio AI

629.5 hours of accepted audio in one month

294% output growth from the previous month

99% dataset accuracy; 12 of 27 batches hit 100%

USE CASE

Multilingual audio segmentation | Speech transcription | Conversational tagging

INDUSTRY

Interactive Entertainment | Conversational AI

SOLUTION

Data Stack

The mission: Scale from 160 to 629.5 audio hours without a rejected batch

This is the final piece in a three-part series on building and running an audio annotation pipeline for a premier gaming studio.

We began by structuring subjective audio data across emotion, accent, and demographic labeling. We hit 95% accuracy within the first 30 days. By January, every batch passed the client audit on the first try. With quality locked in, the focus shifted to volume.

Each month carried a higher output target, and our production grew accordingly:

- November: 50 hours

- December: 107 hours

- January: 140 hours (zero rework achieved)

- February: 160 hours (stability maintained during client-side review delays)

- March: 629.5 hours (peak output)

Zero rework means every batch cleared the client audit on the first submission. Accuracy stayed above 95% throughout.

March’s target was 290 hours, nearly twice our February delivery, and we finished the month at 629.5 hours. The gap between target and delivery came down to pace: the faster we completed each batch, the faster the client released additional volume. We were onboarding new annotator cohorts every week, running five external partners simultaneously, and processing multiple batches at once. By mid-March, the squads were clearing work faster than the original 290-hour target required, and the client expanded the scope to match.
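The month-over-month figures and the gap between target and delivery can be checked with a few lines of arithmetic. The sketch below is illustrative only: it uses the rounded monthly hours listed above, and the names `monthly_hours`, `MARCH_TARGET`, and `growth_pct` are hypothetical, not part of the project's tooling. With these rounded inputs, February to March works out to about 293.4%; the reported 294% presumably reflects unrounded hours, which is an assumption.

```python
# Monthly accepted hours as listed in this case study (rounded figures).
monthly_hours = {
    "November": 50.0,
    "December": 107.0,
    "January": 140.0,
    "February": 160.0,
    "March": 629.5,
}
MARCH_TARGET = 290.0  # original March target, in hours


def growth_pct(previous: float, current: float) -> float:
    """Percentage growth from `previous` to `current`."""
    return (current - previous) / previous * 100.0


# Month-over-month growth for each consecutive pair of months.
months = list(monthly_hours)
for prev, cur in zip(months, months[1:]):
    pct = growth_pct(monthly_hours[prev], monthly_hours[cur])
    print(f"{prev} -> {cur}: {pct:+.1f}%")

# How the March target compared with February, and delivery with the target.
target_vs_feb = MARCH_TARGET / monthly_hours["February"]
delivery_vs_target = monthly_hours["March"] / MARCH_TARGET
print(f"March target vs February delivery: {target_vs_feb:.2f}x")
print(f"March delivery vs March target: {delivery_vs_target:.2f}x")
```

On these inputs the target is about 1.81 times the February delivery, and the March delivery is about 2.17 times the target.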

The challenge: Doubling capacity without fracturing quality

Going into March, five teams with just over 100 annotators ran the project. Each Team Lead managed one team and personally reviewed every task before client submission.

Our annotators had spent months learning to identify subtle emotional cues in monotonous speech, a skill that required repeated calibration on the client's specific taxonomy. Growing to 232 annotators meant Team Leads could no longer review every task directly. The risk was that new hires would not reach that same level of accuracy within the 3-day calibration window while active production continued.

Key pressure points:

- Training at speed: Training 132 new annotators without slowing active production.

- Review capacity: Maintaining effective quality control as active batches grew past 30.

- Operational load: Tracking and reporting at volume without adding manual overhead.

- Regional alignment: Holding consistent standards across Asia and LATAM.

The goal:

To hit the 290-hour target, we had to meet three requirements at once:

- Grow total output from 160 to 290 hours.

- Hold accuracy above 95%.

- Onboard 132 annotators without slowing active production.

The solution: Squads, regions, and automation

We believe scaling subjective audio annotation is an organizational design problem before it is a tooling problem. Automation reduces manual overhead, but it cannot teach a new annotator to identify subtle emotional cues in monotonous speech. That requires a lead who has spent months on the client taxonomy and a structure that keeps that lead close enough to new hires to catch errors before they reach the client.

Squad structure: one lead, four teams

We shifted our organizational structure from a 1:1 to a 1:4 lead-to-team ratio. We paired each Team Lead with a support annotator to manage daily operations for their existing team. This allowed the Lead to form a new team every two weeks. Every new hire was trained by a lead who had spent months calibrating on the client's emotional-cue taxonomy.

Synchronizing Asia and LATAM streams

Our LATAM team ran a second production stream using the same working sheets, project trackers, and quality standards as our Asia-based squads. This alignment ensured work progressed even when one region was offline. Our weekly throughput grew from 6 batches in February to 14 in March.

Automation across three manual bottlenecks

With over 30 active batches, manual tracking was slowing throughput. We automated three processes:

- Applicant screening: Incoming annotators were filtered based on language test scores, removing the need to review hundreds of applications by hand.

- Timesheet processing: The time needed to convert task logs into payment sheets dropped from 30 minutes to under 10 minutes per cycle.

- Report consolidation: Client reports were compiled automatically, eliminating data entry errors that previously delayed invoicing.
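As a rough illustration of the first two automations, the sketch below filters applicants by a language test score and rolls task logs up into a payment sheet. It is a minimal sketch under stated assumptions, not the project's actual tooling: the `Applicant` and `TaskLog` records, the 80-point cutoff, and payment per accepted audio hour are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Applicant:
    name: str
    language_score: float  # hypothetical 0-100 language test score


@dataclass
class TaskLog:
    annotator: str
    accepted_minutes: float  # audio minutes accepted for this task


def screen_applicants(applicants, min_score=80.0):
    """Keep applicants whose language test score meets the cutoff.

    The 80-point default is an illustrative assumption.
    """
    return [a for a in applicants if a.language_score >= min_score]


def build_payment_sheet(logs, rate_per_hour):
    """Sum accepted audio per annotator and convert it to a payout.

    Paying per accepted audio hour is an assumption made for this sketch.
    """
    minutes = defaultdict(float)
    for log in logs:
        minutes[log.annotator] += log.accepted_minutes
    return {
        name: round(total / 60.0 * rate_per_hour, 2)
        for name, total in sorted(minutes.items())
    }


# Example run with made-up data.
applicants = [Applicant("A", 91.0), Applicant("B", 62.5), Applicant("C", 80.0)]
passed = screen_applicants(applicants)

logs = [TaskLog("A", 90.0), TaskLog("C", 30.0), TaskLog("A", 30.0)]
sheet = build_payment_sheet(logs, rate_per_hour=10.0)
```

Keeping each step as a small pure function means the same logs can feed both the payment sheet and a consolidated client report without re-entering data by hand.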

The approach: Holding 99% accuracy while the workforce doubled

Screening annotators before day one

New annotators completed language and rule tests before their first session. They reviewed video tutorials and pre-read materials on segmentation and transcription before live training began. Our live sessions focused entirely on emotion labeling, expectations, and the client's accent taxonomy.

Catching errors before they reach the client

For the first two to three batches, our QC members returned every task submitted by a new annotator, providing specific feedback on emotion labels, segmentation calls, and accent classifications. Annotators corrected and resubmitted until the same errors stopped reappearing.

Experienced annotators worked under a different protocol. Our QC members corrected the remaining errors directly and submitted without sending work back. Our high-performing reviewers also ran annotation tasks themselves, eliminating the need for a separate review pass.

Nothing reached the client without first being cleared by an experienced reviewer. New hires could not submit directly.
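The review rules above can be summarized as a small routing function. This is a simplified sketch, assuming a hypothetical `Task` record and a fixed onboarding window of three batches (the text says two to three). It models only the first QC decision for a task, not the resubmission loop.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    RETURN_WITH_FEEDBACK = "return task to the annotator with feedback"
    CORRECT_AND_SUBMIT = "QC corrects remaining errors and submits"
    SUBMIT = "annotated by a reviewer, no separate review pass"


# The source says "two to three" batches; 3 is the conservative choice here.
ONBOARDING_BATCHES = 3


@dataclass
class Task:
    annotator: str
    batches_completed: int  # batches this annotator has finished so far
    is_high_performing_reviewer: bool = False


def route(task: Task) -> Action:
    """Decide what QC does with a submitted task."""
    if task.is_high_performing_reviewer:
        # Reviewers who annotate directly need no second review layer.
        return Action.SUBMIT
    if task.batches_completed < ONBOARDING_BATCHES:
        # New annotators get every task back with specific feedback.
        return Action.RETURN_WITH_FEEDBACK
    # Experienced annotators: QC fixes what is left and submits.
    return Action.CORRECT_AND_SUBMIT
```

In every branch the task either goes back to the annotator or passes through an experienced reviewer, which mirrors the rule that new hires could not submit directly.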

The results: 629.5 hours delivered, 0 batches rejected

Output grew 294% from February. Accuracy held above 95% throughout March, with 12 of 27 batches hitting 100%. The internal and LATAM teams kept their zero-rework streak intact for three consecutive months.

Three decisions kept per-batch costs from rising with output:

- Tasks completed during onboarding were reviewed and then submitted to the client, which kept training costs low.

- High-performing reviewers handled annotation work directly, removing the need for a second review layer.

- Automation reduced the time cost per batch.

Annotation pipelines that break at scale typically do so because quality ownership spreads too thin as headcount grows. Keeping every submission under an experienced reviewer, regardless of team size, kept accuracy above 95% during a month when the workforce more than doubled. The squad structure, pre-training filters, and automated tracking we built here carry into the next project.

From first batch to full scale, quality comes first

We turned a stable pipeline into peak production without losing accuracy, even as the workforce more than doubled.

More stories

Solving language blind spots with culturally fluent AI

Engaged multilingual Asian talents to capture local language and cultural nuance, accelerating high-accuracy training for an AI communication model.

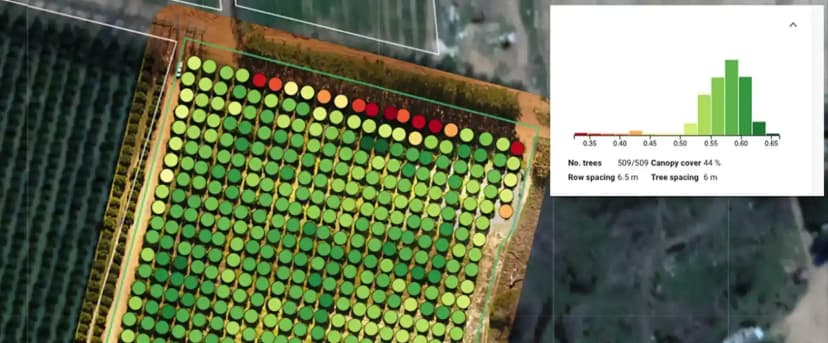

Driving rapid throughput of validated data to power global agri-tech

Designed an extensible AI annotation verification infrastructure for the client's data labeling team to meet high demands and seasonal surges.

Enhancing high-stakes autonomous systems with contextual fidelity

Enabled precise and context-appropriate recognition in an autonomous driving system through custom data labeling and AI annotation process.