Before You Scale AI Agents: 5 Lessons From Real Workflows

Executive Summary

- Reliability starts with clarity: AI agents only perform as well as the workflows they run on. Hidden steps, decisions, and edge cases must be made explicit.

- Structure makes work executable: The GDP framework (Goal, Data, Process) turns vague tasks into systems that can run consistently.

- Control is built into the workflow: Guardrails, validation, and failure handling must be embedded from the start, or errors will repeat at scale.

- Adoption determines scale: Start with simple, high-impact workflows. Early wins build trust and shape how teams use automation.

- Human work shifts to judgment: Automation reduces execution and coordination work. The remaining human role is to define quality, review edge cases, and handle exceptions.

What Changes When Agents Go Live

A renovation quote used to take one to two weeks. By the time it was ready, the customer had often moved on to a competitor.

One contractor changed that by building an AI agent that generates quotes during the initial conversation. The customer selects materials, reviews options, and receives a final number before the meeting ends.

Instead of waiting days for a quote and deciding separately, the customer now evaluates options and commits in the same sitting. The decision point moves from after the meeting to inside it.

Jonathan Chu, who leads operations and delivery at Chemin, works with teams to translate ideas into AI workflows that run in real conditions.

Most teams focus on building AI agents. The real challenge begins when those agents run inside workflows where inputs are messy and decisions are undefined.

Getting Definitions Right In AI Workflows

Before moving into the lessons, it helps to define how these systems behave in practice.

| Concept | Definition | Example |
| --- | --- | --- |
| Automation | Follows predefined rules without deviation. | Receive email → save attachment → rename file → send notification |
| AI Agent | Can think, decide, and act toward a goal. | Reads inbox → summarizes threads → suggests replies → flags for review |
| AI Automation | Combines rules and decision-making. | Classifies email → extracts data → drafts reply → logs into CRM |

Every AI agent workflow relies on three core components:

- Brain: The model that handles reasoning.

- Memory: What the system retains across interactions.

- Tools: Integrations that allow actions like using APIs or spreadsheets.

Knowing when to use memory matters. A chatbot needs it for context, while a structured workflow often doesn't. Adding memory where it’s not needed increases complexity without improving outcomes.
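To make the three components concrete, here is a minimal sketch, not a production design. The `Agent` class, the `stub_brain` rule, and the tool names are all hypothetical; a real system would call a model where the stub sits. The point is that memory is a switch you turn on deliberately, not a default.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    # "Brain": any callable that maps context to a decision (a stub stands in for a model).
    brain: Callable[[str], str]
    # "Tools": the named actions the agent is allowed to take.
    tools: dict[str, Callable[[str], str]]
    # "Memory": earlier requests kept across calls; off for one-shot workflows.
    use_memory: bool = False
    memory: list[str] = field(default_factory=list)

    def run(self, request: str) -> str:
        # Only agents with memory see earlier requests as context.
        context = " | ".join(self.memory + [request]) if self.use_memory else request
        tool_name = self.brain(context)
        if tool_name not in self.tools:
            raise ValueError(f"brain chose unknown tool: {tool_name!r}")
        if self.use_memory:
            self.memory.append(request)
        return self.tools[tool_name](request)


def stub_brain(context: str) -> str:
    # Hypothetical rule standing in for model reasoning.
    return "flag" if "urgent" in context.lower() else "summarize"


tools = {
    "summarize": lambda text: f"summary: {text[:20]}",
    "flag": lambda text: f"flagged for review: {text}",
}

workflow = Agent(brain=stub_brain, tools=tools)                   # structured workflow, no memory
chatbot = Agent(brain=stub_brain, tools=tools, use_memory=True)   # conversational, keeps context
```

The stateless `workflow` treats every request on its own, while `chatbot` lets an earlier message influence a later decision. That extra state is exactly the complexity to avoid when the task doesn't need it.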

"The tools are easier now. The thinking is not."

Lesson 1: Codify Intuition Or Expect Failure

Most workflows look simple until you try to automate them.

Take a task described as "research, write, and submit." Beneath that phrase are hidden decision layers. Someone has to decide what counts as a reliable source, filter weak inputs, and check whether the output meets the standard.

Humans perform these steps implicitly. AI agents require explicit instructions.

“People think the task is simple. What’s missing is everything in between.” - Jonathan Chu, Operations & Delivery Lead

When these steps stay implicit, the system fills in the missing logic on its own, and outputs become inconsistent. This is why workflows that appear to work during testing break under real conditions.

A useful test: ask whether a new hire could follow the workflow without asking a single question. If the answer is no, the workflow isn't ready.

Lesson 2: The GDP Framework Turns Ideas Into Systems

Most delays come from starting without structure.

The GDP framework forces clarity before any development begins. It breaks work into three parts:

- Goal: The exact outcome required, starting with an action verb like generate or notify.

- Data: The inputs already available, what needs to be collected, and the constraints.

- Process: How the data transforms step-by-step.

Figure 1. Example of how the GDP framework translates a task into a structured workflow.

Vague instructions like “generate a report” leave too much open to interpretation. A defined goal, such as extracting validated inputs to produce a structured summary, gives the workflow something it can execute.
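One way to keep a GDP definition honest is to write it down as a small data structure and check it for gaps before building anything. The sketch below is illustrative only: `GDPSpec`, `ACTION_VERBS`, and the specific checks are assumptions, not part of the framework itself.

```python
from dataclasses import dataclass

# Hypothetical list of acceptable action verbs; extend it to fit your own workflows.
ACTION_VERBS = {"generate", "notify", "extract", "classify", "summarize"}


@dataclass(frozen=True)
class GDPSpec:
    goal: str              # exact outcome, starting with an action verb
    data: dict[str, str]   # input name -> where it comes from or its constraint
    process: list[str]     # ordered transformation steps

    def problems(self) -> list[str]:
        """Return the gaps that would leave the workflow open to interpretation."""
        issues = []
        words = self.goal.split()
        first_word = words[0].lower() if words else ""
        if first_word not in ACTION_VERBS:
            issues.append("goal should start with an action verb")
        if not self.data:
            issues.append("no data inputs listed")
        if len(self.process) < 2:
            issues.append("process needs explicit steps")
        return issues


vague = GDPSpec(goal="Generate a report", data={}, process=[])
defined = GDPSpec(
    goal="Generate a structured summary from validated inputs",
    data={"submissions": "rows in the intake sheet, already validated"},
    process=["validate inputs", "extract fields", "write summary"],
)
```

Here `vague.problems()` reports the missing data and process even though the goal starts with a verb, while `defined.problems()` comes back empty. An action verb is necessary but not sufficient.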

Even small details in the process layer matter. For instance, an agent must know whether to replace existing data in a spreadsheet or add a new entry to the bottom of the file. These two instructions result in different behaviors. Without this level of specificity, the system behaves unpredictably.
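The difference between the two spreadsheet instructions is easy to see in code. This sketch models a sheet as a list of rows in memory; `WriteMode`, `write_row`, and the `id` key are hypothetical names chosen for illustration.

```python
from enum import Enum


class WriteMode(Enum):
    REPLACE = "replace"  # overwrite the existing row that has the same key
    APPEND = "append"    # always add a new row at the bottom


def write_row(sheet: list[dict], row: dict, mode: WriteMode, key: str = "id") -> list[dict]:
    """Return a new sheet with `row` written according to `mode`."""
    if mode is WriteMode.APPEND:
        return sheet + [row]
    # REPLACE must find a match; failing loudly beats silently doing nothing.
    if not any(existing[key] == row[key] for existing in sheet):
        raise KeyError(f"no row with {key}={row[key]!r} to replace")
    return [row if existing[key] == row[key] else existing for existing in sheet]


sheet = [{"id": 1, "status": "draft"}, {"id": 2, "status": "draft"}]
update = {"id": 1, "status": "final"}

replaced = write_row(sheet, update, WriteMode.REPLACE)  # still two rows, row 1 updated
appended = write_row(sheet, update, WriteMode.APPEND)   # three rows, two of them with id 1
```

Making the mode a required argument forces whoever defines the workflow to choose, instead of leaving the agent to guess.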

“The main cost is time. You lose time when you don’t know exactly what you’re building.”

Teams that define these elements up front spend less time debugging and produce more consistent outputs.

Lesson 3: Design Guardrails To Manage Scaled Failure

A single mistake repeated across thousands of tasks is a system-level failure.

Guardrails control what an agent can do at each stage. They belong in the workflow design from the start; added later, they arrive after the errors have already repeated.

In delivery-focused workflows, this plays out across four stages.

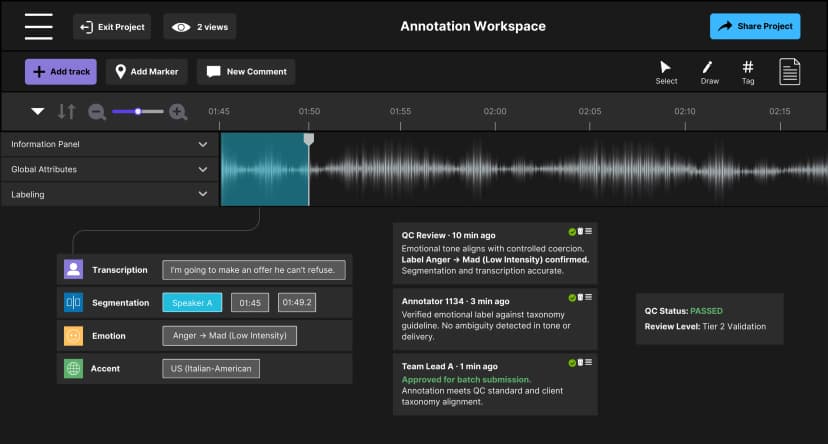

Figure 2. Guardrails are embedded across hiring, training, execution, and quality control.

This four-stage structure shaped how the delivery team built an annotator database.

It tracks each contributor’s experience and accuracy over time. When a client asks how quickly a team can reach 95% accuracy, the answer becomes a data-backed projection rather than a general estimate.
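As a rough illustration of what a data-backed projection could look like, the sketch below fits a straight line through a contributor's weekly accuracy scores and extrapolates to a target. This is an assumption for illustration, not the delivery team's actual method: the function name and sample scores are made up, and real learning curves usually flatten, so a linear fit tends to be optimistic.

```python
from typing import Optional


def weeks_until_target(history: list[float], target: float = 0.95) -> Optional[float]:
    """Extrapolate a least-squares line through weekly accuracy scores.

    Returns the number of further weeks after the last observation, 0.0 if the
    fitted line has already reached the target, or None if accuracy is not improving.
    """
    n = len(history)
    if n < 2:
        raise ValueError("need at least two weekly scores")
    mean_x = (n - 1) / 2
    mean_y = sum(history) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(history))
    slope = sxy / sxx
    if slope <= 0:
        return None  # flat or declining: no projection is possible
    intercept = mean_y - slope * mean_x
    target_week = (target - intercept) / slope
    return max(0.0, target_week - (n - 1))


# Illustrative scores only, not real contributor data.
improving = weeks_until_target([0.80, 0.83, 0.86, 0.89])
declining = weeks_until_target([0.90, 0.85, 0.80])
```

Returning `None` for a flat or declining trend matters as much as the number: it tells the client conversation that more training is needed before any timeline can be quoted.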

Red-teaming and blue-teaming keep guardrails effective as workflows scale.

Red-teaming deliberately tries to break the system by submitting incomplete data, introducing formatting errors, and testing undefined edge cases. These failures are surfaced before they repeat in production.
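A red-team pass can be as simple as feeding known-bad inputs to a validator and listing the ones it fails to reject. In this sketch the record fields, the `validate` function, and the test cases are all hypothetical.

```python
# Hypothetical schema for a submitted annotation record.
REQUIRED = {"task_id": str, "label": str, "confidence": float}


def validate(record: dict) -> list[str]:
    """Return a list of errors; an empty list means the record is accepted."""
    errors = []
    for name, expected in REQUIRED.items():
        if name not in record:
            errors.append(f"missing field: {name}")
        elif not isinstance(record[name], expected):
            errors.append(f"wrong type for {name}")
    conf = record.get("confidence")
    if isinstance(conf, float) and not 0.0 <= conf <= 1.0:
        errors.append("confidence out of range")
    return errors


# Every case here is deliberately bad and should be rejected.
RED_TEAM_CASES = {
    "incomplete": {"task_id": "t1", "label": "cat"},
    "formatting error": {"task_id": "t2", "label": "cat", "confidence": "0.9"},
    "out of range": {"task_id": "t3", "label": "cat", "confidence": 1.7},
    "empty label": {"task_id": "t4", "label": "", "confidence": 0.8},
}


def red_team(validator, cases: dict) -> list[str]:
    """Return the names of bad inputs the validator failed to reject."""
    return [name for name, record in cases.items() if not validator(record)]


escaped = red_team(validate, RED_TEAM_CASES)
```

With this validator, `escaped` contains `"empty label"`: an empty string has the right type, so it slips through. That is the kind of undefined edge case worth catching before it repeats in production.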

Blue-teaming maps where bad data or incorrect decisions can enter the workflow, and builds restrictions around those points. In delivery, this can mean tightening how task assignments trigger or defining what happens when an accuracy score drops below a threshold.
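Defining what happens when an accuracy score drops below a threshold can be written as an explicit rule rather than left to judgment in the moment. The thresholds and action names below are placeholders, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class Annotator:
    name: str
    accuracy: float  # rolling accuracy between 0.0 and 1.0


# Hypothetical thresholds; set them from your own quality data.
PAUSE_BELOW = 0.80
REVIEW_BELOW = 0.90


def assignment_action(annotator: Annotator) -> str:
    """Decide what the workflow does before assigning the next task."""
    # Guard the entry point: a corrupt score must not trigger an assignment.
    if not 0.0 <= annotator.accuracy <= 1.0:
        return "reject_record"
    if annotator.accuracy < PAUSE_BELOW:
        return "pause_and_retrain"
    if annotator.accuracy < REVIEW_BELOW:
        return "assign_with_review"
    return "assign"


actions = {
    a.name: assignment_action(a)
    for a in [
        Annotator("ana", 0.97),
        Annotator("ben", 0.85),
        Annotator("cy", 0.62),
        Annotator("bad-import", 97.0),  # e.g. a percentage loaded as a fraction
    ]
}
```

The first check is the blue-team part: it restricts the point where bad data could enter and quietly produce a confident-looking decision.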

Both run continuously. As volume grows, new failure patterns emerge. Each one becomes a signal that gets built back into the system.

Lesson 4: Prioritize Adoption To Ensure Scaling

Adoption is the main constraint when scaling AI agents. Many teams build a workflow, see limited returns, and stop.

The first system didn’t deliver enough value, so the next one never got built.

“You don’t start with the biggest problem. You start with the one you understand.”

A practical way to prioritize is a difficulty-versus-impact lens. Start with tasks that are easy to execute and produce visible results.

Figure 4. Prioritizing AI workflows using a difficulty versus impact matrix.

Start with low-difficulty, high-impact workflows to build momentum.
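The difficulty-versus-impact lens can be applied with a few lines of code once a team has scored its candidate workflows. The 1 to 5 scale, the midpoint, the quadrant labels, and the sample scores below are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    difficulty: int  # 1 (easy) to 5 (hard), scored by the team
    impact: int      # 1 (low) to 5 (high), scored by the team


def quadrant(candidate: Candidate, midpoint: int = 3) -> str:
    """Place a candidate workflow in one of four quadrants."""
    easy = candidate.difficulty < midpoint
    high_impact = candidate.impact >= midpoint
    if easy and high_impact:
        return "start here"
    if high_impact:
        return "plan later"
    if easy:
        return "fill-in"
    return "skip for now"


# Illustrative scores only.
candidates = [
    Candidate("rebuild the whole intake process", difficulty=5, impact=5),
    Candidate("send the same message to a group", difficulty=1, impact=3),
    Candidate("summarize repeated inputs", difficulty=2, impact=4),
    Candidate("rename archived files", difficulty=1, impact=1),
]

first_wave = [c.name for c in candidates if quadrant(c) == "start here"]
```

The scores themselves are a conversation, not a measurement. The value of the exercise is that the biggest problem lands in "plan later" instead of being attempted first.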

For individuals, this often comes from daily repetitive work: sending the same message to a group, organizing incoming requests, or summarizing repeated inputs.

For teams, scope matters. Keeping workflows small and involving the people doing the work makes them easier to test and improve.

Momentum comes from completed workflows. Once a workflow runs reliably and saves measurable time, the next one is much easier to justify.

Lesson 5: Shift Human Focus Toward Judgment

Automation changes how work is done, and people remain central to it.

Once execution is automated, human value concentrates in three places:

- Defining what "correct" looks like.

- Reviewing edge cases that the system can't resolve.

- Deciding when to intervene.

All three call for judgment rather than execution.

This shift is visible in delivery work. Automation handles repetitive coordination, freeing teams to focus on more complex problems. One example is building a retrieval-augmented generation (RAG) tool that helps annotators find answers in long instruction documents without escalation.

In practice, this shows up when teams stop fixing individual outputs and start adjusting the workflow itself.

Over time, teams move from task execution to tracking how data flows, where decisions are made, and where breakdowns occur.

The data annotation industry is increasingly commoditized. As models improve, basic labeling work becomes easier to replicate, and more teams can complete similar tasks with comparable outputs. Teams that stay competitive rely on domain expertise, accurate delivery projections, and well-designed workflows. Automation frees up the capacity to develop those strengths.

Non-technical professionals now play a direct role in building and maintaining these systems.

The ability to design and run AI agent workflows is becoming a core skill across roles. As AI adoption deepens across industries, the people who understand and improve workflows will shape how AI systems perform.

Clear Thinking Over Better Models

These lessons point to the same conclusion: reliable AI systems depend less on the model and more on how the work around it is defined, structured, and adopted.

For enterprise leaders, one element ties everything together, and it’s often underestimated: culture.

Teams are already stretched. Adding experimentation to delivery work feels like extra work, so it gets deprioritized.

What changes this is sequencing. Start by showing where things are heading and what skills matter next. When teams see the direction, they’re more willing to experiment.

Leaders who scale AI effectively tend to focus on three things:

- Creating space for experimentation alongside delivery work.

- Keeping projects small and time-bound so teams can learn and iterate.

- Involving teams in deciding what gets automated.

When people have a hand in shaping the direction, adoption follows naturally.

The renovation contractor succeeded because every decision was defined before the agent ran. The agent didn’t just generate a quote faster; it captured the decision while the customer was still engaged. That level of clarity is what makes automation hold at scale.

Define the workflow. Build the culture around it. Then scale.

Ready to put this into practice? If your workflows produce inconsistent outputs as volume grows, talk to our team about turning them into systems that scale with control.
