Careers in AI Aren’t Just for Engineers: How Operations Teams Redesign AI Workflows

From Delivery to Systems Thinking

Most AI teams treat automation as a tooling problem: pick the right platform, connect the right APIs, and the workflow runs itself. In AI operations, where data labeling, annotation pipelines, and payment processes must execute consistently across hundreds of contributors, the bottleneck is rarely the tool. It's the workflow itself.

When processes depend on implicit handoffs, undocumented steps, and individual judgment calls, they work at low volume. At scale, they fragment. Errors compound. Quality becomes unpredictable.

In Part 1, we established that AI performance depends on delivery, a repeatable framework of people and processes that keeps quality stable. This article examines what comes next: redesigning how that work operates so it can be automated without breaking.

At Chemin, this shift is led by the operations team focused on system design.

"I design systems so people don't have to do repetitive work manually,” says Sunnie Loh, Operations Automation Lead. "The process has to be clearly defined first. You can't automate what you haven't structured."

Scaling delivery requires structured workflows that can operate consistently under pressure. Teams that scale successfully codify their processes and build systems around them.

The Breaking Point of Manual Work

Workflows often look manageable until volume increases. The same steps that worked before begin to slow down, require more coordination, and produce inconsistent results.

Operational friction follows a consistent pattern:

- Volume surge: Incoming data or tasks exceed human processing capacity.

- Static processes: Work methods remain manual as complexity grows.

- Accuracy erosion: Repeated handling introduces inconsistency.

- Sustainability gap: Manual execution becomes too costly to maintain.

A concrete example shows how this plays out. Consolidating over 20 separate project timesheets previously required approximately 3 hours of manual work, with data copied and verified across multiple files. With automation, this was reduced to under 5 minutes.

Figure 1. Timesheet Consolidation: Manual vs Automated

Manual consolidation introduces fragmentation and inconsistency. Automation creates a single, consistent data flow.

This shift raises a crucial question: What needs to be defined before automation can work reliably?

The Methodology: System Thinking Before Automation

Automation is often approached through tools, with the expectation that AI can interpret incomplete instructions and fill in missing steps. Teams identify a problem, select a tool, and begin building. When the workflow is not clearly defined, the system produces inconsistent outputs that are difficult to verify.

“Clarity in the process matters most. If the process is unclear, the automation will fail,” says Sunnie.

In AI operations, the constraint is repeatability. Systems execute the same logic across every input. Any undefined step is repeated at scale. Before automation, the workflow must be structured so that each step runs consistently.

In practice, this means defining the workflow across four components, each of which determines whether the system can scale reliably.

Input

Where the data originates, what format it takes, and what metadata travels with it.

In annotation workflows, this includes the labeling schema, edge-case definitions, and source data provenance. An automated system that doesn't validate inputs will scale with inconsistencies.

Process

Mapping the precise, step-by-step transformation of the data.

This is where most workflows are under-defined. Teams know the desired output, but the intermediate steps, including task assignment, exception handling, and quality control sequencing, are often carried out in someone's head rather than encoded in a system.

Output

The exact result the system must produce is clearly defined enough to verify.

In data delivery, this means specifying not just the deliverable format but the acceptance criteria: completeness, consistency, and schema compliance. Without this, validation cannot be automated, and quality becomes subjective.

Data storage

Where information is held to ensure consistency and reuse.

Workflows that store intermediate results across disconnected spreadsheets and email threads cannot be automated reliably. Centralizing data storage is often the precondition for everything else.

Mapping these components exposes where workflows rely on manual intervention or assumed knowledge. Once defined, automation can be applied precisely. Repetitive, time‑consuming, or error‑prone tasks are automated, while judgment-based decisions remain human.

A second constraint appears at scale: reusability.

Workflows built for a single use case often require rebuilding when conditions change. Without a structure, automation fragments rather than scales. The objective is to design workflows that can be reused across contexts and maintained over time.

Case Study: What Actually Breaks (and What Gets Fixed)

A workflow rarely breaks all at once. It breaks at the points where one task hands off to the next.

In the external annotator payment process, those transitions required constant intervention. Data entered through separate forms, moved through email threads, and was consolidated across multiple files before it could be used. Each stage relied on someone to verify or reconstruct information before it could continue.

The issue was not the number of steps, but how those steps interacted.

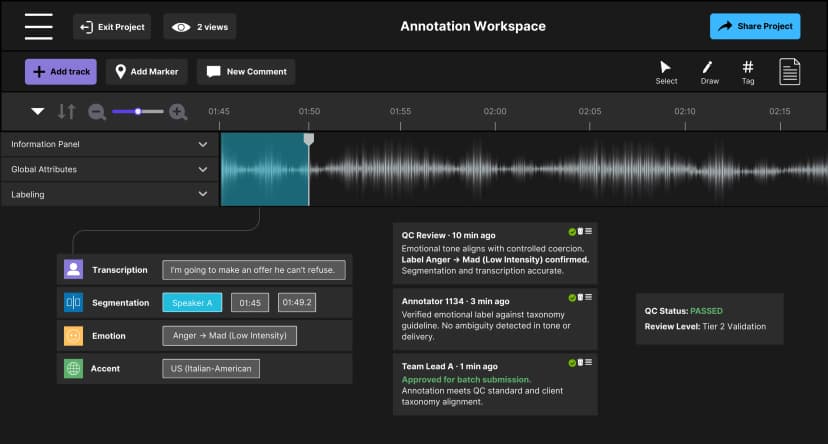

Figure 2. External Annotator Payment Workflow (Before Automation)

The workflow relied on manual handoffs between disconnected steps. A single missing detail can stall payment for weeks. Effort scales faster than output.

The workflow relied on manual handoffs between disconnected steps. A single missing detail can stall payment for weeks. Effort scales faster than output.

Data moved without continuity. Each transition introduced delay and variability, causing errors to compound across stages.

This shift matters beyond internal efficiency. In annotation operations, payment reliability directly affects workforce retention. Delays or inaccuracies increase churn, forcing teams to recruit and onboard replacements.

“If payments are always delayed or wrong, annotators stop wanting to work with us,” says Sunnie. “Then we have to hire more people, retrain them, and projects slow down. Data quality drops because the people who understood the work are no longer there.”

This is why Chemin treats operational infrastructure as a delivery problem rather than a back-office function. The systems that pay annotators, track hours, and manage onboarding sit on the same reliability chain as the data itself. When the operational layer breaks, the data layer reflects it.

The pressure to automate became urgent as the annotator base scaled 7 times within 3 months. What once worked at low volume began to fragment under pressure.

“If we still used the old process, it simply wouldn’t scale. Now we can handle that growth with the same human resources.”

Once the workflow was mapped end-to-end, each transition was evaluated based on how data should move. Validation was applied at entry, removing the need for repeated checks. Data feeds directly into a central structure, eliminating downstream reconstruction. Timesheet consolidation and calculation run automatically. The approval chain was reduced to a single audit step, where human input remains necessary.

Figure 3. External Annotator Payment Workflow (After Automation)

Connected workflow with validation at entry. Downstream rework is removed, and human input is limited to judgment. This workflow can take on more work without added complexity.

The system moves through structure, not coordination. Data flows through a defined path, and human intervention is applied only where judgment is required.

The Next Layer: From Scalability to Reliability

For enterprise teams, automation reduces operational costs associated with manual processes and restores capacity for higher-value work. Reducing a task from 3 hours to 5 minutes allows project managers to focus on client relationships and strategic planning.

In the external annotator workflow, the system absorbed a 7x increase in volume without increasing headcount. This was achieved by structuring the workflow to operate consistently as demand grew.

Payment reliability became a trust layer. When annotators are paid accurately and on time, they continue contributing. When they are not, they leave. This introduces churn, delays delivery, and increases hiring overhead.

Many AI deployments fail here. Systems are scaled before they are structured. As volume increases, errors spread and quality declines. The constraint is not the model, but whether the system can maintain consistency under changing conditions.

At Chemin, this translates into a simple operating principle: automate where consistency matters, retain humans where judgment is required. Redesigning workflows is how we ensure growth doesn’t break our delivery.

AI reliability is determined by the systems built around it. Delivery stabilizes execution, and system design and automation enable scale. The next layer, quality control, will determine whether that scale remains accountable.

If you're building AI systems and thinking about the operational infrastructure behind them, learn more about partnering with Chemin.

If this kind of systems thinking is how you work, explore open roles on our team. We’re looking for people who see operations as a design problem.

Discover more

Lessons from Building AI Agents in Client-Critical Workflows

From large annotation teams to an automation-first pipeline in under a week. A blueprint for staging, containment, and building AI architectures that remain durable under pressure.

Zero Rework at Scale: How We Stabilized Audio AI Production

Discover how Chemin stabilized audio AI production for gaming intelligence by embedding governance and multi-regional workflows to achieve a Zero Rework milestone.

Inside LLM Systems: Designing for Risk & Reliability

An inside look at LLM systems, focusing on how system design, feedback loops, and observability influence risk and reliability over time.